Field robots are already at work in areas such as mining, agriculture, and environmental monitoring. Future applications will require larger-scale systems with multiple robots that can work autonomously in difficult conditions that push the limits of sensing, perception, planning, and communication. This talk will focus on coordinated object perception in outdoor environments and discuss how occlusions and sensor characteristics limit detection and classification accuracy, how to reduce uncertainty by combining observations from carefully chosen viewpoints, and how motion planning and mobility can play a major role in addressing these challenges through an active perception approach. The talk will give an overview of our research in developing new methods for active perception in decentralised teams that integrate ideas from perception and planning, information theory, and game AI to allow robots to coordinate their behaviour in real time. We have implemented our methods in physical systems with wireless communication, and the talk will describe several examples of multi-robot systems that search realistic environments to detect and classify objects. The talk will also look towards future systems and discuss key open challenges at the frontier of mobility, sensing, and communication.

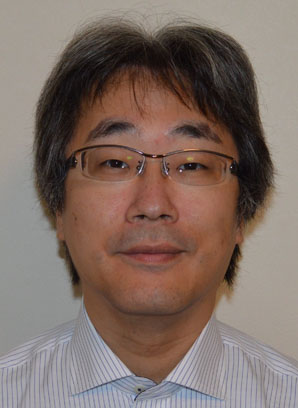

Social robots are beginning to appear in our daily lives, yet deploying them is not as easy as one might imagine. We developed a human-like social robot, Robovie, and studied how to make it serve people in public spaces such as a shopping mall. On the technical side, we developed a human-tracking sensor network that enables us to robustly identify the locations of pedestrians. Given that the robot was able to understand pedestrian behavior, we studied various forms of human-robot interaction. We faced many difficulties along the way. For instance, the robot failed to initiate interaction with a person, and it failed to coordinate with its environment, for example by causing congestion around it. To address these problems, we have developed various human interaction models. These models enabled the robot to better serve individuals and also helped us to understand people’s crowd behavior, such as congestion around the robot. I plan to talk about a couple of studies along this line, and about some of the successful services provided by the social robot in the shopping mall, hoping to provide insight into what social robots in public spaces will look like in the near future.